In modern enterprises, the exponential growth of data means organizational knowledge is distributed across multiple formats, ranging from structured data stores such as data warehouses to multi-format data stores like data lakes. Information is often redundant, and analyzing data requires combining multiple formats, including written documents, streamed data feeds, audio and video. This makes gathering information for decision making a challenge. Employees are unable to quickly and efficiently search for the information they need, or collate results across formats. A “Knowledge Management System” (KMS) allows businesses to collate this information in one place, but not necessarily to search through it accurately.

Meanwhile, ChatGPT has led to a surge in interest in leveraging Generative AI (GenAI) to address this problem. Customizing Large Language Models (LLMs) is a great way for businesses to implement “AI”; customized LLMs are invaluable to both businesses and their employees because they help contextualize organizational knowledge.

However, training models requires huge hardware resources, significant budgets and specialist teams. A number of technology vendors offer API-based services, but there are doubts around security and transparency, with considerations across ethics, user experience and data privacy.

Open LLMs, i.e. models whose code and datasets have been shared with the community, have been a game changer in enabling enterprises to adapt LLMs. However, pre-trained LLMs tend to perform poorly on enterprise-specific information searches. Additionally, organizations want to evaluate the performance of these LLMs in order to improve them over time. These two factors have led to the development of an ecosystem of tooling software for managing LLM interactions (e.g. Langchain) and LLM evaluations (e.g. Trulens), but this tooling can be much more complex to manage at an enterprise level.

The Solution

The Cloudera platform provides enterprise-grade machine learning and, in combination with Ollama, an open source LLM localization service, offers an easy path to building a customized KMS with the familiar ChatGPT style of querying. The interface allows for accurate, business-wide querying that is quick and easy to scale, with access to data sets provided through Cloudera’s platform.

The enterprise context for this KMS can be provided through Retrieval-Augmented Generation (RAG), which helps contextualize an LLM to a specific domain. This allows the responses from a KMS to be specific and helps avoid vague or unsupported responses, commonly called hallucinations.
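To make the retrieval step behind RAG concrete, here is a minimal sketch in plain Python. It is not the implementation used in the application described here: the sample documents, the function names and the bag-of-words similarity are all illustrative assumptions, and a production KMS would more likely use embeddings and a vector database.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets; a real KMS would index enterprise documents.
DOCUMENTS = [
    "Income tax in India is levied under the Income Tax Act of 1961.",
    "The cafeteria is open from 9 am to 5 pm on weekdays.",
    "Expense claims must be filed within 30 days of purchase.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, top_k=1):
    """Return the top_k documents most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda d: cosine_similarity(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Prepend the retrieved context to the question before it goes to an LLM."""
    context = "\n".join(retrieve(question, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

Calling `build_prompt("How is income tax calculated?", DOCUMENTS)` produces a prompt that carries the Indian tax snippet as context, which is how a model can answer in a domain-specific way without the user naming the domain.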

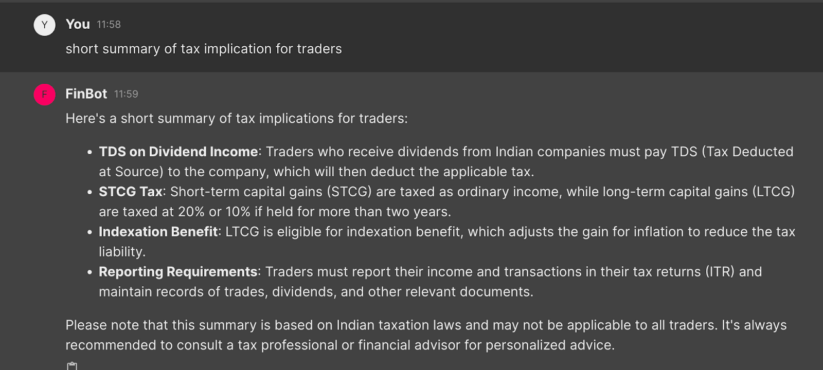

The image above demonstrates a KMS built using the llama3 model from Meta. This application is contextualized to finance in India. In the image, the KMS explains that the summary is based on Indian taxation laws, even though the user has not explicitly asked for an answer related to India. This contextualization is possible thanks to RAG.

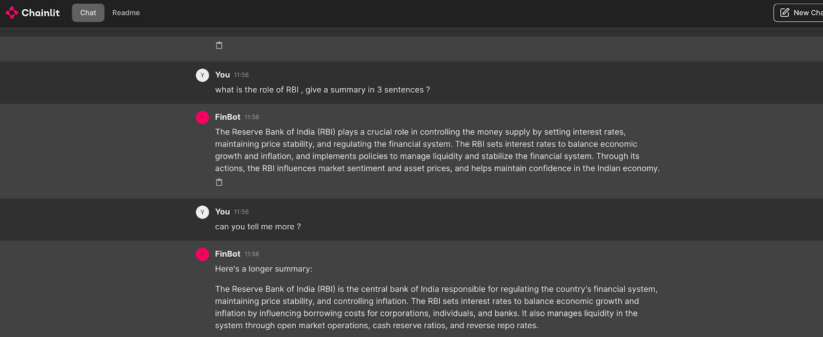

Ollama provides optimization and extensibility to easily set up private, self-hosted LLMs, thereby addressing enterprise security and privacy needs. Developers can write just a few lines of code and then integrate other frameworks in the GenAI ecosystem, such as Langchain and Llama Index for prompt framing, vector databases such as ChromaDB or Pinecone, and evaluation frameworks such as Trulens. GenAI-specific frameworks such as Chainlit also allow such applications to be “smart” through memory retention between questions.
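As a rough illustration of how little code a self-hosted model needs, the sketch below talks to Ollama's local REST endpoint using only the Python standard library. It assumes an Ollama server is running on its default port (11434) and that the `llama3` model has already been pulled; the helper names `build_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port, with llama3 pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3", url=OLLAMA_URL):
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3"):
    """Send the prompt to the local model and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `ask("Summarize our expense policy.")` returns the model's reply as a string. Because the request never leaves the local host, prompts and documents stay inside the enterprise environment.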

In the picture above, the application is able to first summarize and then understand the follow-up question “can you tell me more”, by remembering what was answered earlier.
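Memory retention of this kind usually comes down to resending the earlier turns with each new question. The following sketch shows the idea with a hand-written `ChatMemory` class and a stand-in model; both are hypothetical and are not Chainlit's API, which manages session state on the application's behalf.

```python
class ChatMemory:
    """Keeps the running conversation so each new question carries earlier turns."""

    def __init__(self, llm):
        # `llm` is any callable that takes a list of {"role", "content"} dicts
        # and returns the assistant's reply as a string (hypothetical interface).
        self.llm = llm
        self.messages = []

    def ask(self, question):
        """Record the question, query the model with the full history, record the reply."""
        self.messages.append({"role": "user", "content": question})
        answer = self.llm(list(self.messages))  # pass a copy of the history
        self.messages.append({"role": "assistant", "content": answer})
        return answer


def fake_llm(messages):
    """Stand-in model that only reports how many messages it was shown."""
    return f"seen {len(messages)} message(s)"


chat = ChatMemory(fake_llm)
first = chat.ask("Summarize the tax rules.")
second = chat.ask("Can you tell me more?")
```

Here the second call receives three messages (the first question, the first answer and the follow-up), which is what lets a real model resolve “more” to the earlier summary.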

However, the question remains: how do we evaluate the performance of our GenAI application and control hallucinated responses?

Traditionally, models are measured by comparing predictions with reality, also called “ground truth.” For example, if my weather prediction model predicted that it would rain today and it did rain, then a human can evaluate and say the prediction matched the ground truth. For GenAI models running in private environments and at scale, such human evaluations would be impossible.
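The weather example corresponds to a simple accuracy calculation, sketched below with made-up data. The function name and the four-day sample are illustrative assumptions only.

```python
def accuracy(predictions, ground_truth):
    """Fraction of predictions that match the observed outcome."""
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground_truth must have the same length")
    if not predictions:
        raise ValueError("at least one prediction is required")
    matches = sum(p == g for p, g in zip(predictions, ground_truth))
    return matches / len(predictions)


# Hypothetical data: did the model predict rain, and did it actually rain?
predicted_rain = [True, False, True, True]
observed_rain = [True, False, False, True]
score = accuracy(predicted_rain, observed_rain)  # 3 of 4 days match
```

This works because each prediction has exactly one correct answer. A free-text LLM response has no such single reference, which is why a different kind of metric is needed.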

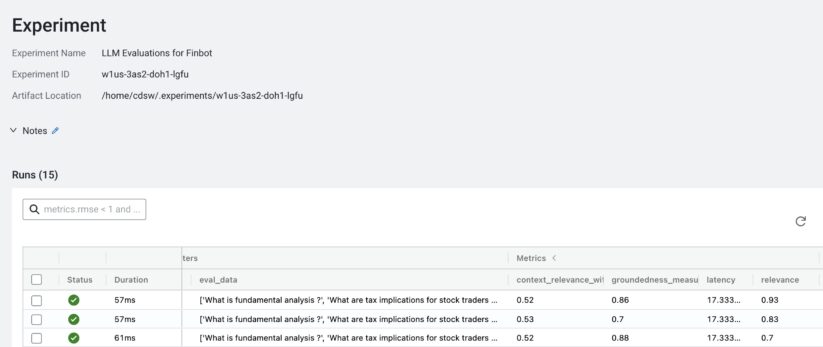

Open source evaluation frameworks, such as Trulens, provide different metrics to evaluate LLMs. Based on the question asked, the GenAI application is scored on relevance, context and groundedness. Trulens therefore provides a way to apply metrics in order to evaluate and improve a KMS.

The picture above demonstrates saving these metrics in the Cloudera platform for LLM performance evaluation.
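To give a feel for what a groundedness score measures, the sketch below uses a deliberately crude word-overlap heuristic. This is not how Trulens computes its scores and does not use the Trulens API; the function names and sample text are assumptions chosen to keep the example self-contained.

```python
import re


def tokens(text):
    """Distinct lowercase word tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness(answer, context):
    """Share of distinct answer words that also appear in the context (0.0 to 1.0)."""
    answer_tokens = tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & tokens(context)) / len(answer_tokens)


# Hypothetical retrieved context and two candidate answers.
context = "Expense claims must be filed within 30 days of purchase."
grounded = groundedness("Claims must be filed within 30 days.", context)
ungrounded = groundedness("Claims are approved by the CEO personally.", context)
```

The first answer is fully supported by the retrieved context and scores high, while the second introduces unsupported claims and scores low. Scores like these can be stored alongside each question and answer to track a KMS over time.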

With the Cloudera platform, businesses can build AI applications hosted by open-source LLMs of their choice. The Cloudera platform also provides scalability, allowing progress from proof of concept to deployment for a large variety of users and data sets. Democratized AI is provided through cross-functional user access, meaning robust machine learning on hybrid platforms can be accessed securely by many people throughout the business.

Ultimately, Ollama and Cloudera provide enterprise-grade access to localized LLMs, to scale GenAI applications and build robust Knowledge Management Systems.

Find out more about Cloudera and Ollama on GitHub, or sign up to Cloudera’s limited-time “Fast Start” package here.