1. Introduction:

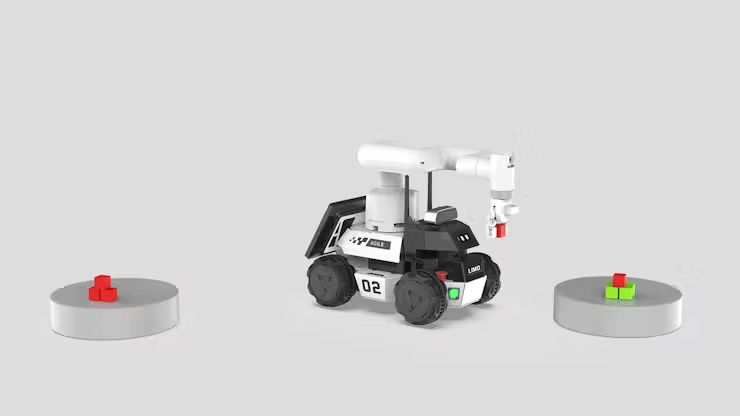

This article mainly introduces a practical application of the LIMO Cobot by Elephant Robotics in a simulated scenario. You may have seen earlier posts about LIMO Cobot's technical cases, A[LINK], B[LINK]. We are writing another related article because the original test environment, while it demonstrated basic functionality, often seemed overly idealized and simplified as a simulation of real-world applications. Therefore, we aim to use the robot in an environment closer to real operating conditions and share some of the issues that came up along the way.

2. Comparing the Old and New Scenarios:

First, let's look at what the old and new scenarios are like.

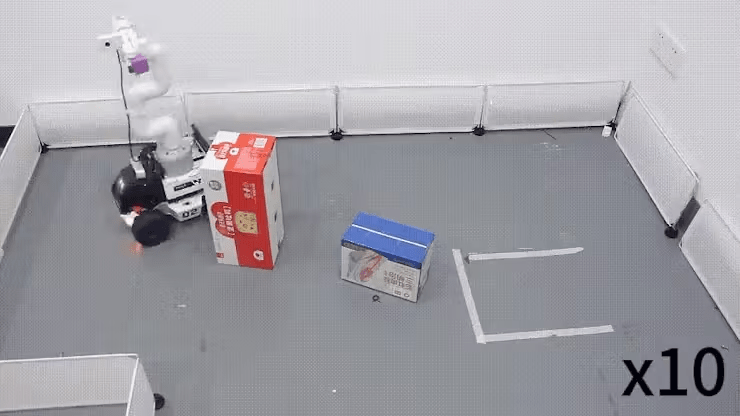

Old Scenario: A simple setup with a few obstacles, relatively regular objects, and a field enclosed by barriers, roughly 1.5 m × 2 m in size.

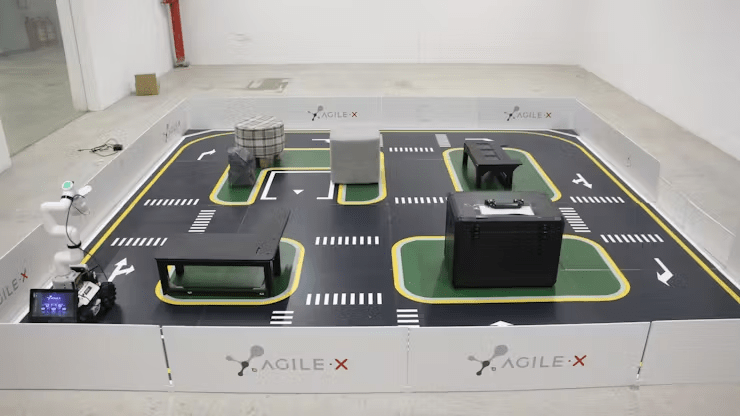

New Scenario: The new scenario contains a greater variety of obstacles with different shapes, including a hollowed-out object in the middle, and simulates a real environment with road guide markings, parking spaces, and more. The field measures 3 m × 3 m.

This change of environment matters: a larger field with more varied obstacles is a more demanding test and demonstration of how complete and broadly applicable our product is.

3. Analysis of Practical Cases:

Next, let's briefly introduce the overall process.

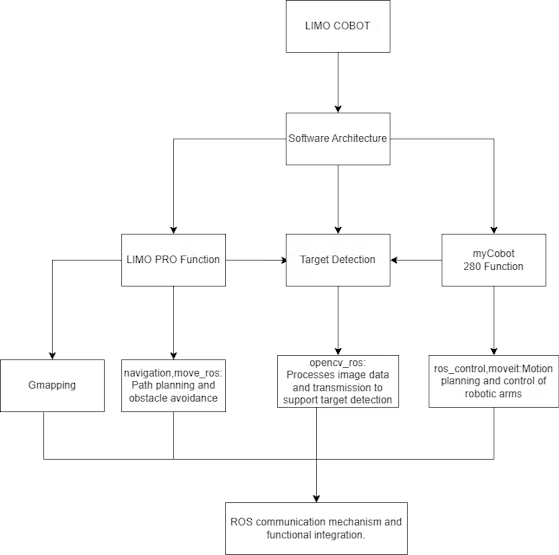

The process is mainly divided into three modules: the first is the functionality of LIMO PRO, the second is machine vision processing, and the third is the functionality of the robot arm. (For a more detailed introduction, please see the previous article https://robots-blog.com/2024/05/16/exploring-elephant-robotics-limo-cobot/.)

LIMO PRO is mainly responsible for SLAM mapping: it uses the gmapping algorithm to build a map of the terrain, navigates on that map, and ultimately carries out fixed-point patrol.
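Fixed-point patrol on a saved map generally comes down to sending the robot a sequence of 2-D goal poses (x, y, heading). ROS navigation goals carry the heading as a quaternion, so a patrol script has to convert each yaw angle. The sketch below is an illustration under assumptions, not the LIMO code: the waypoint names `A`/`B` and their coordinates are hypothetical placeholders, and the goals are plain dicts so the example runs without ROS.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical patrol points in the map frame: (x [m], y [m], yaw [rad]).
# Replace them with coordinates read off your own map in rviz.
WAYPOINTS: Dict[str, Tuple[float, float, float]] = {
    "A": (1.0, 0.5, 0.0),
    "B": (2.5, 2.0, math.pi / 2),
}


def yaw_to_quaternion(yaw: float) -> Tuple[float, float, float, float]:
    """Rotation about the z axis only, returned as (x, y, z, w)."""
    half = yaw / 2.0
    return (0.0, 0.0, math.sin(half), math.cos(half))


def build_goal(x: float, y: float, yaw: float) -> dict:
    """Plain-dict stand-in for a pose in the 'map' frame."""
    return {
        "frame_id": "map",
        "position": (x, y, 0.0),
        "orientation": yaw_to_quaternion(yaw),
    }


def patrol_route(order: List[str]) -> List[dict]:
    """Turn an ordered list of waypoint names into goal poses."""
    return [build_goal(*WAYPOINTS[name]) for name in order]


if __name__ == "__main__":
    for goal in patrol_route(["A", "B"]):
        print(goal)
```

If the navigation stack is `move_base`-based (an assumption here), each dict would be copied into a `move_base_msgs/MoveBaseGoal` and sent with `actionlib.SimpleActionClient`; that part is left out because it needs a running ROS master.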

myCobot 280 M5 is mainly responsible for grasping objects. A camera and a suction pump end effector are mounted at the end of the robot arm. The camera captures the real scene, the image is processed with OpenCV algorithms to find the coordinates of the target object, and the arm then performs the grasping operation.
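Finding the target's coordinates typically reduces to two steps: segment the object into a binary mask, then take the mask's centroid from its image moments (m10/m00 and m01/m00, the same quantities `cv2.moments` returns). The sketch below computes the centroid in pure Python so it runs without OpenCV, then maps the pixel into the arm's plane with a hypothetical linear calibration (`MM_PER_PX`, `CAMERA_OFFSET_MM` and the axis convention are all assumptions; the article does not give the real calibration).

```python
from typing import List, Optional, Tuple

Mask = List[List[int]]  # 1 = object pixel, 0 = background

# Hypothetical calibration: scale and offset from the image centre to the
# arm's base frame. Real values must be measured for your camera mount.
MM_PER_PX = 0.5
CAMERA_OFFSET_MM = (150.0, 0.0)


def mask_centroid(mask: Mask) -> Optional[Tuple[float, float]]:
    """Centroid (u, v) of the foreground pixels via raw image moments."""
    m00 = m10 = m01 = 0
    for v, row in enumerate(mask):
        for u, pixel in enumerate(row):
            if pixel:
                m00 += 1
                m10 += u
                m01 += v
    if m00 == 0:
        return None  # no target visible in this frame
    return (m10 / m00, m01 / m00)


def pixel_to_arm(u: float, v: float, width: int, height: int) -> Tuple[float, float]:
    """Map a pixel to arm-plane (x, y) in mm with a simple linear model."""
    du = (u - (width - 1) / 2.0) * MM_PER_PX
    dv = (v - (height - 1) / 2.0) * MM_PER_PX
    # Assumed axis convention: image down -> arm +x, image right -> arm -y.
    return (CAMERA_OFFSET_MM[0] + dv, CAMERA_OFFSET_MM[1] - du)
```

With OpenCV, the mask would usually come from `cv2.inRange` on an HSV image and the centroid from `cv2.moments(mask)`; the ratios are the same whether mask pixels are 1 or 255, because the scale cancels.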

Overall process:

1. LIMO builds the map. ⇛

2. Run the fixed-point cruising program. ⇛

3. LIMO goes to point A ⇛ myCobot 280 performs the grasping operation ⇒ LIMO goes to point B ⇛ myCobot 280 performs the placing operation.

4. ↺ Repeat step 3 until no target objects are left, then terminate the program.
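The loop in steps 3 and 4 can be sketched as a small control function. This is an illustration, not the program used in the article: `go_to`, `grasp`, `place` and `target_visible` are hypothetical hooks that would wrap the navigation, arm and vision code, each returning `False` on failure.

```python
from typing import Callable, List


def run_task(
    go_to: Callable[[str], bool],
    grasp: Callable[[], bool],
    place: Callable[[], bool],
    target_visible: Callable[[], bool],
    max_rounds: int = 20,
) -> List[str]:
    """Repeat A -> grasp -> B -> place until no target remains at A.

    Returns a log of what happened; stops at the first failure.
    """
    log: List[str] = []
    for _ in range(max_rounds):
        if not go_to("A"):
            log.append("navigation to A failed")
            break
        if not target_visible():
            log.append("no target left, stopping")
            break
        if not grasp():
            log.append("grasp failed")
            break
        log.append("grasped at A")
        if not go_to("B"):
            log.append("navigation to B failed")
            break
        if not place():
            log.append("place failed")
            break
        log.append("placed at B")
    return log


if __name__ == "__main__":
    remaining = [2]  # simulate two objects waiting at point A

    def fake_grasp() -> bool:
        remaining[0] -= 1
        return True

    print(run_task(lambda p: True, fake_grasp, lambda: True, lambda: remaining[0] > 0))
```

The `max_rounds` cap is a defensive choice: if the vision step keeps reporting a target that the arm never actually picks up, the loop still terminates instead of cycling forever.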

Next, let's walk through the actual execution process.

Mapping:

First, start the lidar by opening a new terminal and entering the following command:

`roslaunch limo_bringup limo_start.launch pub_odom_tf:=false`

Then, start the gmapping algorithm by opening another new terminal and entering the command:

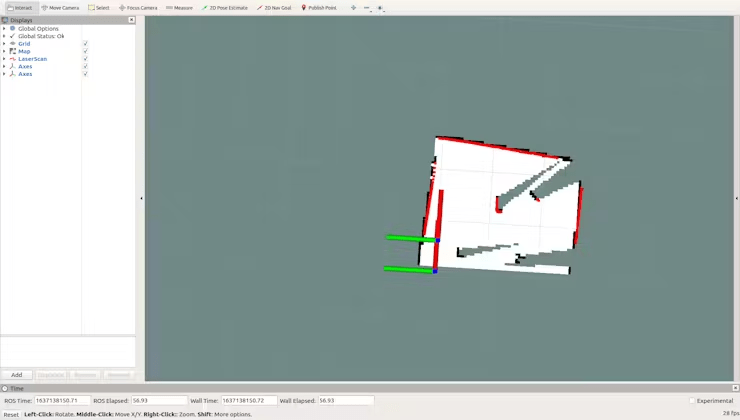

`roslaunch limo_bringup limo_gmapping.launch`

After a successful startup, the rviz visualization tool will open, and you will see the interface shown in the figure above.

At this point, you can switch the controller to remote-control mode and drive the LIMO around to build the map.

After building the map, run the following commands to save it to a specified directory:

1. Switch to the directory where you want to save the map. Here, the map is saved to `~/agilex_ws/src/limo_ros/limo_bringup/maps/`. Enter the command in the terminal:

`cd ~/agilex_ws/src/limo_ros/limo_bringup/maps/`

2. After switching to `~/agilex_ws/src/limo_ros/limo_bringup/maps/`, enter the following command in the terminal:

`rosrun map_server map_saver -f map1`
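`map_saver -f map1` writes two files into the current directory: `map1.pgm` (the occupancy image) and `map1.yaml` (its metadata: `image`, `resolution`, `origin`, `negate`, `occupied_thresh`, `free_thresh`). Picking patrol points such as A and B from the saved map means converting an image pixel into map coordinates. The sketch below parses the YAML with the standard library only (a deliberately minimal parser for the flat `key: value` layout map_saver produces) and applies the map_server convention that `origin` is the pose of the lower-left pixel. The sample values in `SAMPLE_YAML` are hypothetical, and the conversion assumes the origin yaw is 0.

```python
import ast
from typing import Any, Dict, Tuple

# Hypothetical metadata in the layout map_saver writes.
SAMPLE_YAML = """image: map1.pgm
resolution: 0.050000
origin: [-10.000000, -10.000000, 0.000000]
negate: 0
occupied_thresh: 0.65
free_thresh: 0.196
"""


def parse_map_yaml(text: str) -> Dict[str, Any]:
    """Minimal parser for the flat 'key: value' file map_saver writes."""
    meta: Dict[str, Any] = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        key, _, value = line.partition(":")
        value = value.strip()
        try:
            meta[key.strip()] = ast.literal_eval(value)  # numbers and lists
        except (ValueError, SyntaxError):
            meta[key.strip()] = value  # plain strings, e.g. the image file name
    return meta


def pixel_to_world(meta: Dict[str, Any], row: int, col: int, height: int) -> Tuple[float, float]:
    """Centre of image pixel (row, col) in map coordinates (origin yaw assumed 0)."""
    res = float(meta["resolution"])
    ox, oy = float(meta["origin"][0]), float(meta["origin"][1])
    # Image row 0 is the top of the picture, but 'origin' refers to the lower-left pixel.
    x = ox + (col + 0.5) * res
    y = oy + (height - 1 - row + 0.5) * res
    return (x, y)
```

The pixel's `row`/`col` can be read from the image in any viewer; `height` is the image height in pixels.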